AI is changing Microsoft 365 security: 2025 forecasts and best practices

Tap into Microsoft MVP Jasper Oosterveld’s insights as he unpacks how generative AI is reshaping Microsoft 365 security and how IT teams can stay ahead of the curve.

We’re well into 2025, but it’s never too late to talk trends—especially when it comes to generative AI and its impact on Microsoft 365 security.

Since GPT-4 dropped in 2023, AI tools like Copilot, Google Gemini, and Meta AI have gone mainstream. Employees have welcomed these tools with open arms. We’ve seen this before, though—back in the early 2010s when cloud services like Dropbox hit the scene fast and hard, forcing IT departments to do a full 180 on their strategy and shift from on-prem to the cloud. It was a big change in how businesses approached software and services.

Generative AI feels even bigger. The difference now is that it’s a whole new beast. It’s fast. It’s powerful. And it’s reshaping how we think about data security and compliance in Microsoft 365.

Let’s break down what to expect and what to do about it.

Generative AI and the rise of shadow AI

Years ago, the term shadow IT popped up when business users were stuck with slow, outdated IT systems. Meanwhile, cloud services like Dropbox and Slack were taking off with features Microsoft just wasn’t offering yet.

I remember talking with many customers during that time who were frustrated. They just wanted better tools to get their work done.

Fast forward to today, and we’re facing the same kind of challenge—but now it’s with generative AI apps. Employees want to work better, move faster. And let’s be clear: you’re not going to stop the AI train. But you can guide the ride.

Here’s how to get started:

1. Communicate first, act later.

Don’t lead with blocking tools. Work with your comms, security, and risk teams to create internal messaging and policies around AI usage. Microsoft has solid templates to get you started—like the Copilot Adoption Playbook.

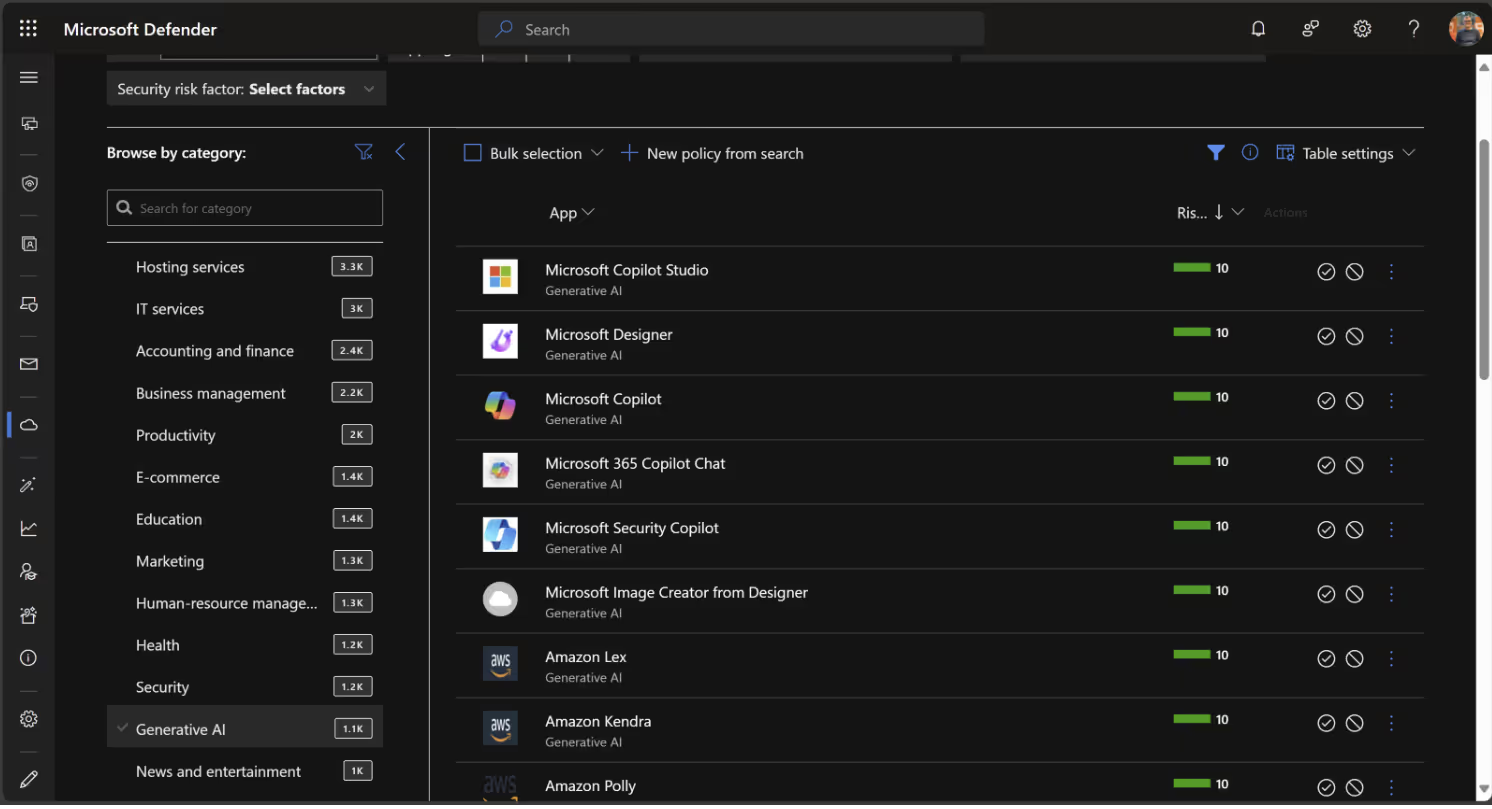

2. Monitor usage with Microsoft Defender for Cloud Apps

Once you’ve set the stage with internal communication, it’s time to look under the hood. Microsoft Defender for Cloud Apps includes a built-in cloud app catalog with a growing list of generative AI apps already being tracked.

You can use it to see which generative AI tools are in use and set up app policies that trigger alerts for new tools. The Data and AI security dashboard (in preview) and AI threat protection in Microsoft Defender for Cloud are also worth a look.
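To make the alerting idea concrete, here is a minimal Python sketch of the logic behind a "new generative AI app" alert. It is not Defender's API. The `DiscoveredApp` record, its `category` field, and the sanctioned list are all hypothetical stand-ins for whatever your discovery data actually looks like.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiscoveredApp:
    """Hypothetical record for one app seen in discovery data."""
    name: str
    category: str
    users: int


# Hypothetical allow list: the AI tools your organization has approved.
SANCTIONED = frozenset({"Microsoft 365 Copilot"})


def new_genai_apps(discovered, sanctioned=SANCTIONED, category="Generative AI"):
    """Return unsanctioned generative AI apps, most-used first."""
    flagged = [
        app for app in discovered
        if app.category == category and app.name not in sanctioned
    ]
    return sorted(flagged, key=lambda app: app.users, reverse=True)


if __name__ == "__main__":
    sample = [
        DiscoveredApp("Microsoft 365 Copilot", "Generative AI", 120),
        DiscoveredApp("ChatGPT", "Generative AI", 45),
        DiscoveredApp("Dropbox", "Cloud storage", 30),
    ]
    for app in new_genai_apps(sample):
        print(f"Review needed: {app.name} ({app.users} users)")
```

Sorting by user count is a design choice, not a requirement: it puts the tools with the widest footprint at the top of your review list.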

3. Protect sensitive data in Microsoft Purview

Alongside Microsoft Defender for Cloud Apps, make sure you’re keeping an eye on how sensitive information is being used with generative AI tools. That’s where Data Loss Prevention (DLP) in Microsoft Purview comes in.

With DLP, you can track the flow of your organization’s sensitive information types—like credit card numbers, client data, or intellectual property—and catch when that data is being accessed or shared through AI tools. It’s a crucial layer of visibility and protection as AI usage grows across your tenant.
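If you're wondering what "detecting a sensitive information type" means in practice, here is a rough Python sketch for credit card numbers. It pairs a pattern match with the Luhn checksum to cut down on false hits. This is an illustration only and not how Purview implements its classifiers. The function names and the regular expression are my own assumptions.

```python
import re

# Assumed pattern: 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Check a digit string against the Luhn checksum."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return digit strings in the text that look like valid card numbers."""
    hits = []
    for match in CARD_PATTERN.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits


if __name__ == "__main__":
    prompt = "Summarize the dispute for card 4111 1111 1111 1111."
    print(find_card_numbers(prompt))
```

The checksum step matters because plenty of 16-digit strings (order numbers, tracking IDs) are not card numbers at all.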

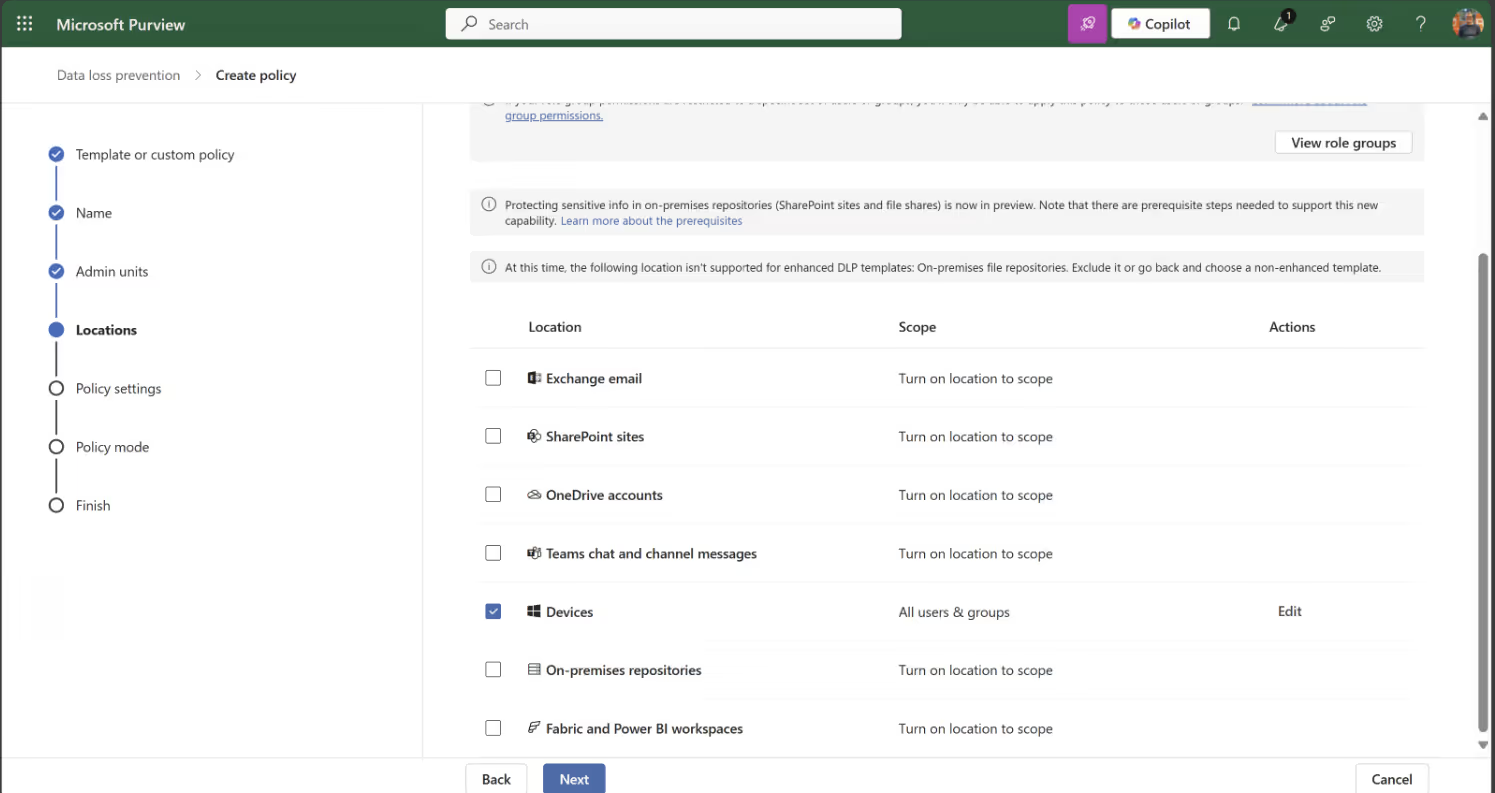

Data Loss Prevention (DLP)

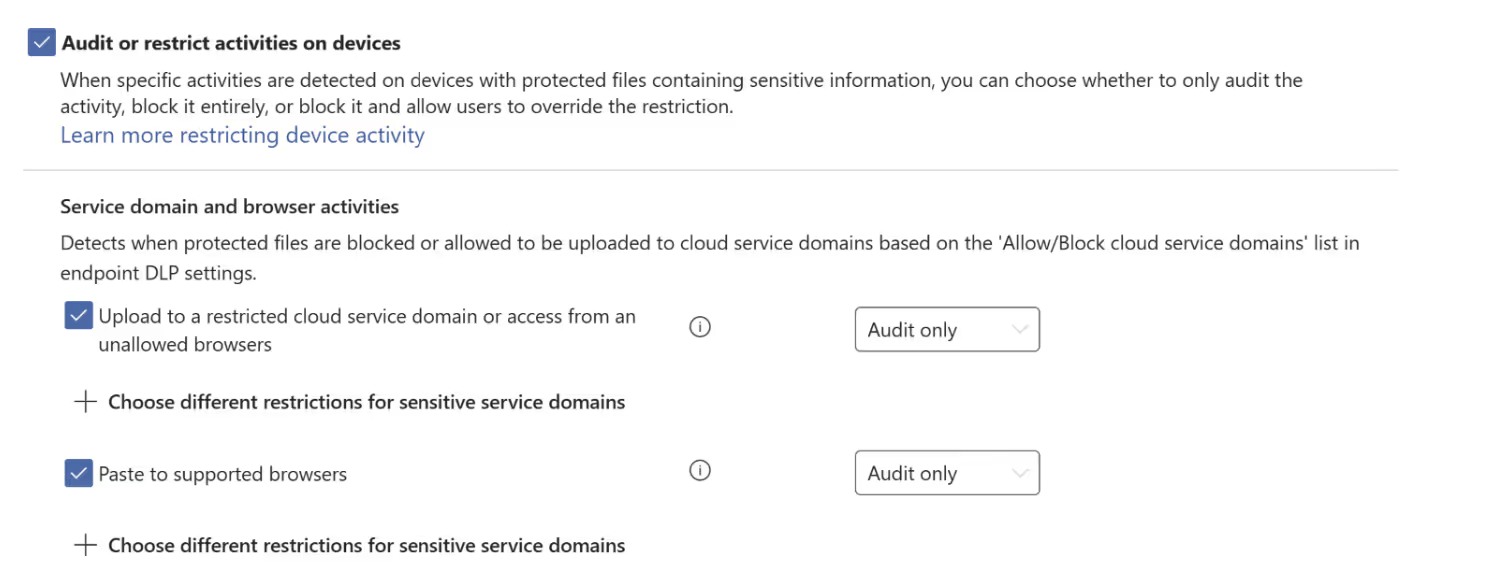

Once your devices are onboarded in Microsoft 365, you can connect them to Microsoft Purview by enabling device onboarding in the settings. With devices onboarded, you’ll be able to create DLP policies to monitor activity. Be sure to select Devices as the location in your policy:

It’s best to start in audit-only mode to monitor behavior without enforcing restrictions right away.

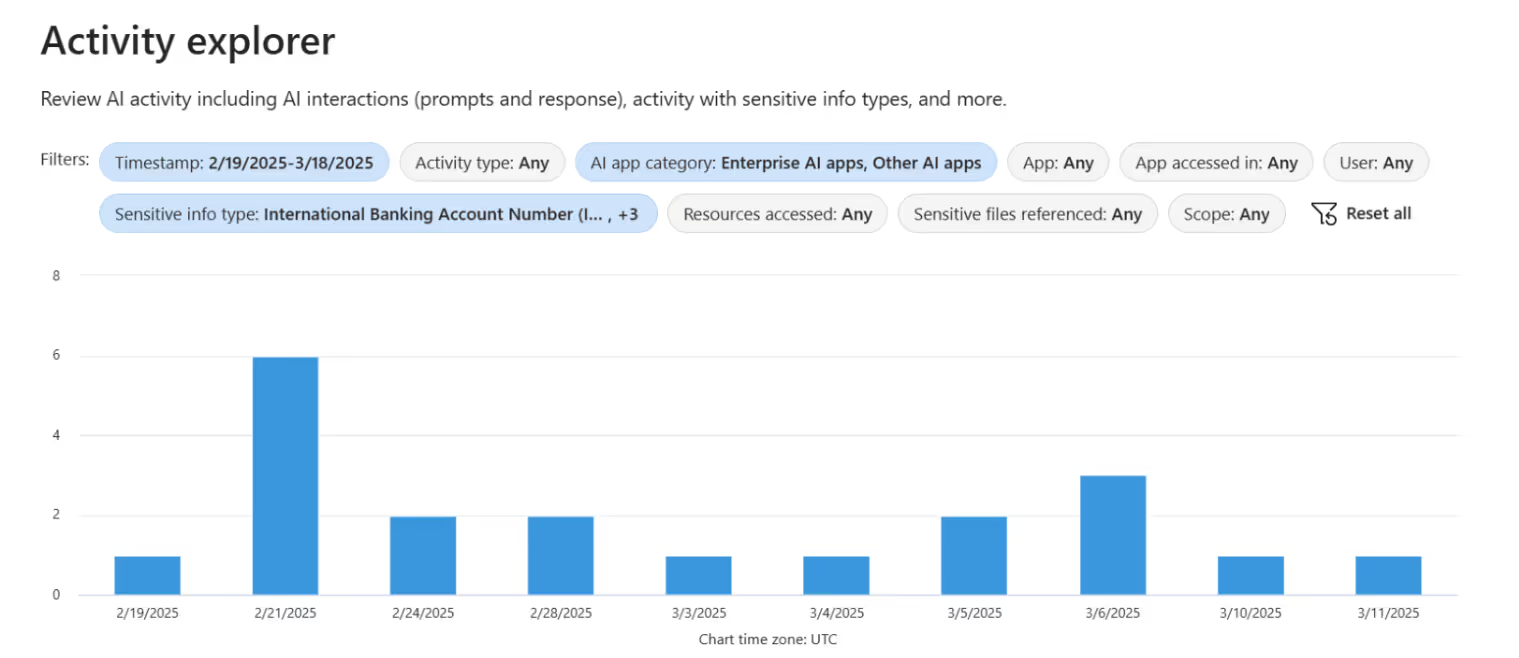

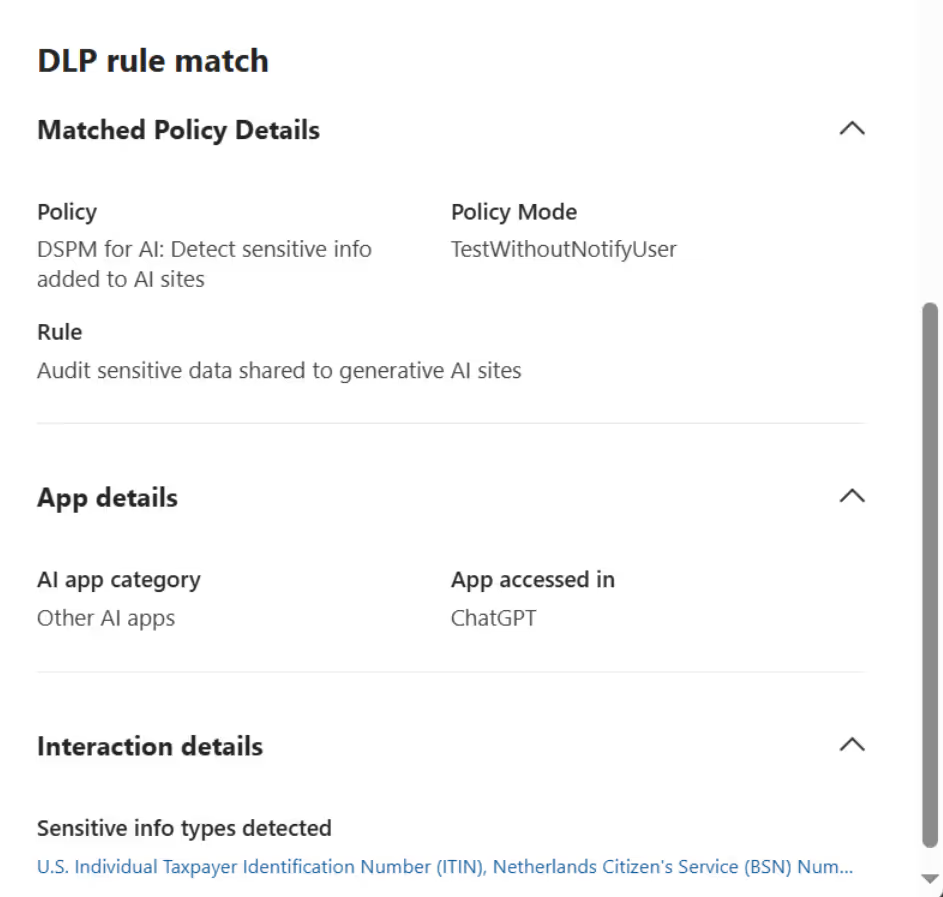

You can view your results in Activity Explorer to get detailed insights into what’s being accessed and by whom.

Data Security Posture Management

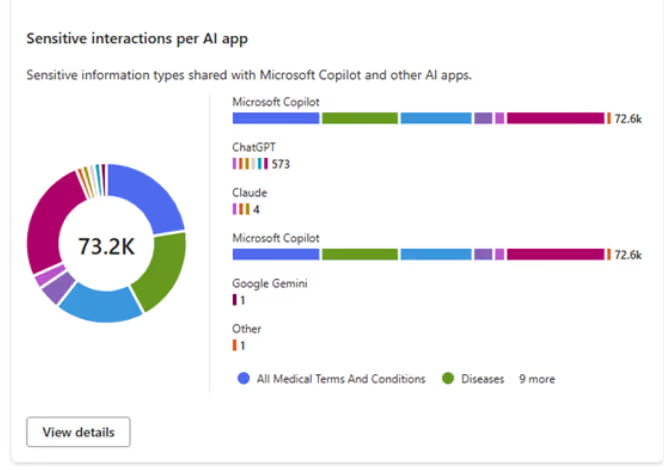

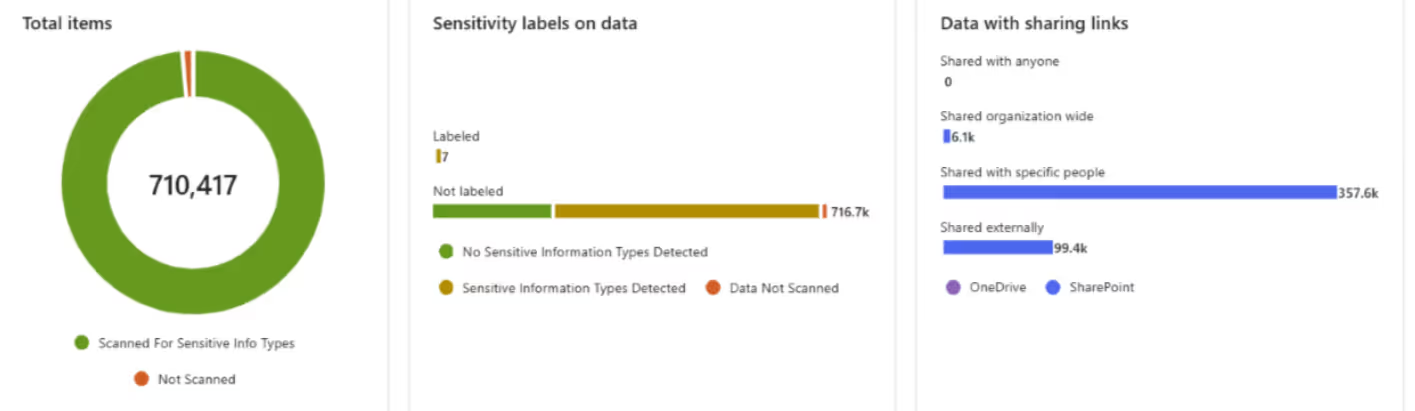

Still in preview, Data Security Posture Management (DSPM) already offers helpful visibility into how AI tools are interacting with your organization’s sensitive info. Here’s a quick look at the kind of insights it can provide:

Heads-up: DSPM can surface false positives, so it’s a good idea to validate findings using Microsoft Purview’s Data Explorer.

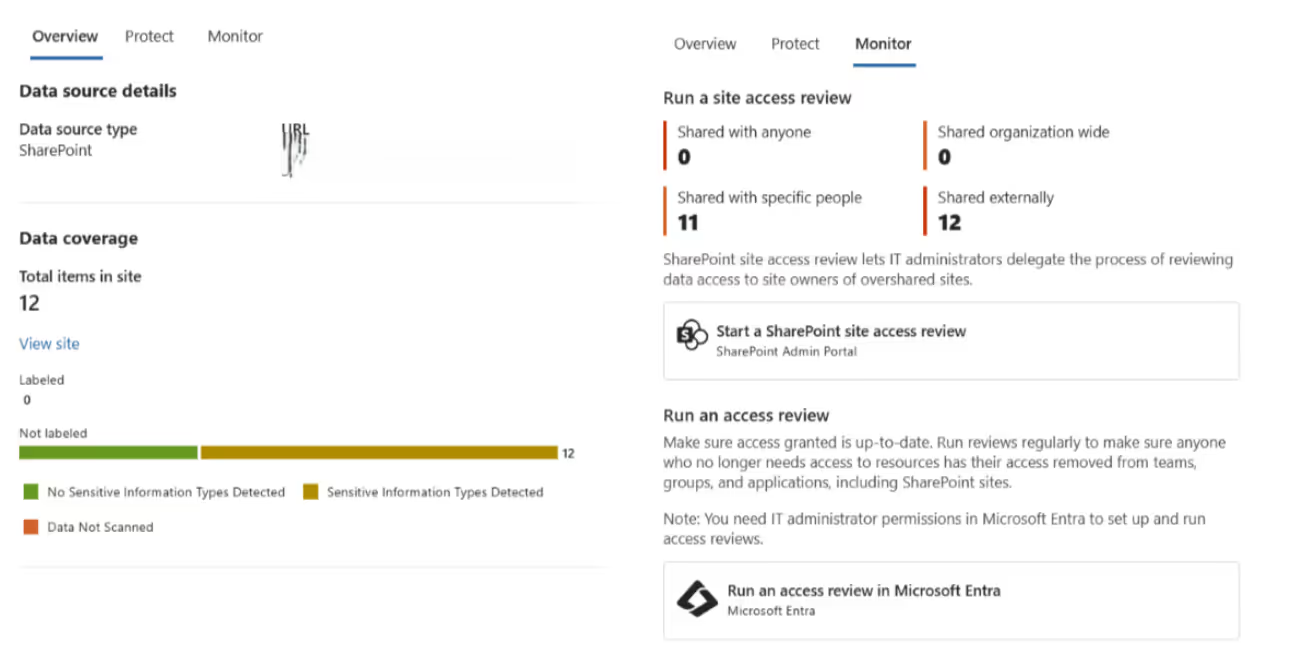

On top of usage insights, DSPM also includes data assessments. These give you a better understanding of your sensitivity labels and the types of sharing links being used across your tenant.

You can drill down by SharePoint site and take action using SharePoint Advanced Management—which, bonus, is now included with Microsoft 365 Copilot licenses.

Regulations and AI: Compliance just got trickier

Let’s talk about regulations. These are rules or directives made and maintained by a government or regulatory body to guide behavior—whether that’s in specific sectors, industries, or society at large.

The goal? Enforce laws, protect the public, ensure safety, maintain standards, and promote fairness. Basically, to stop bad behavior before it causes real harm. And honestly, I fully support them—especially now, with the rise and impact of AI. We need clear guardrails.

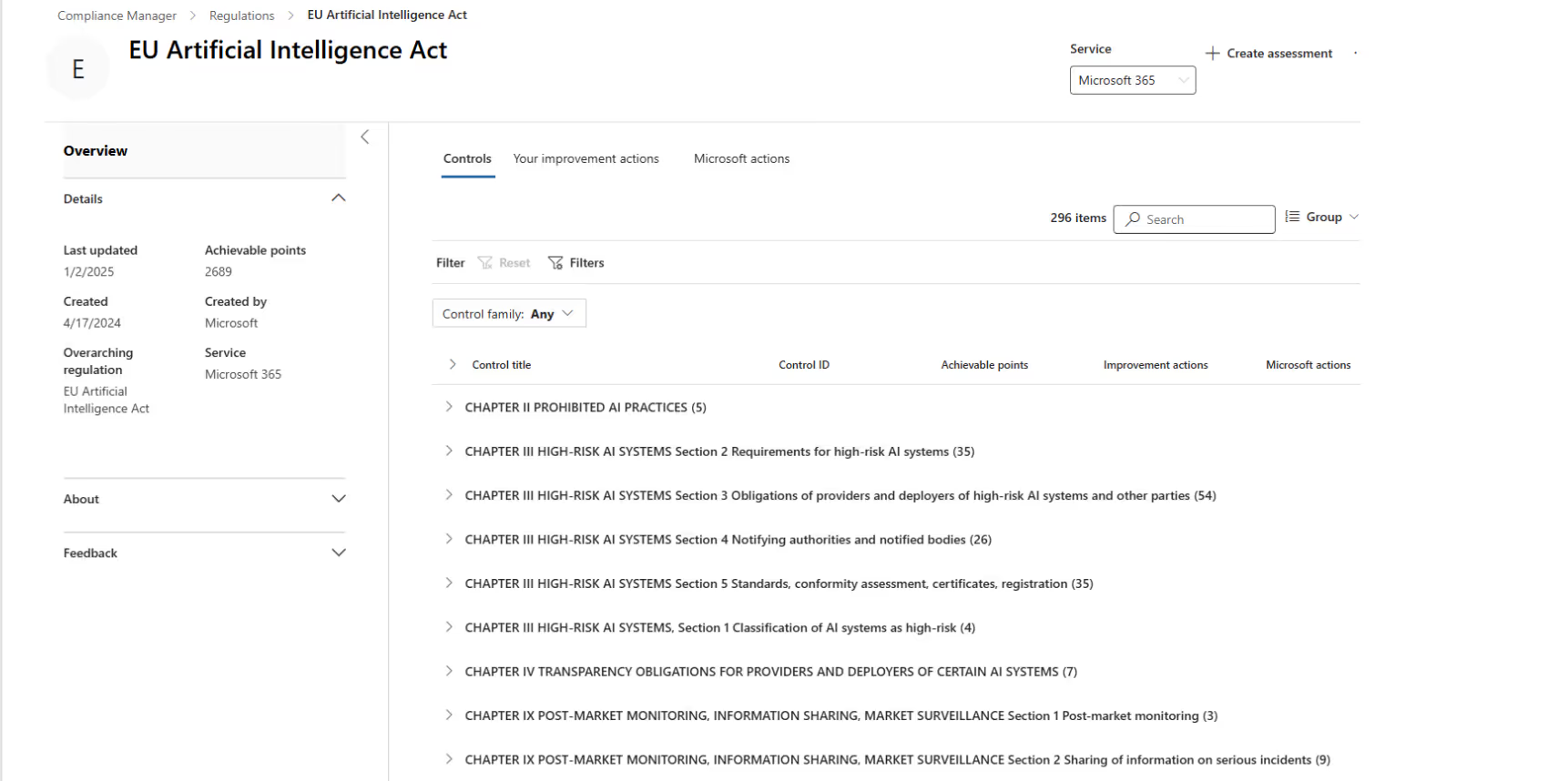

One good example is the EU Artificial Intelligence Act, introduced by the European Union to bring structure and accountability to how AI is used.

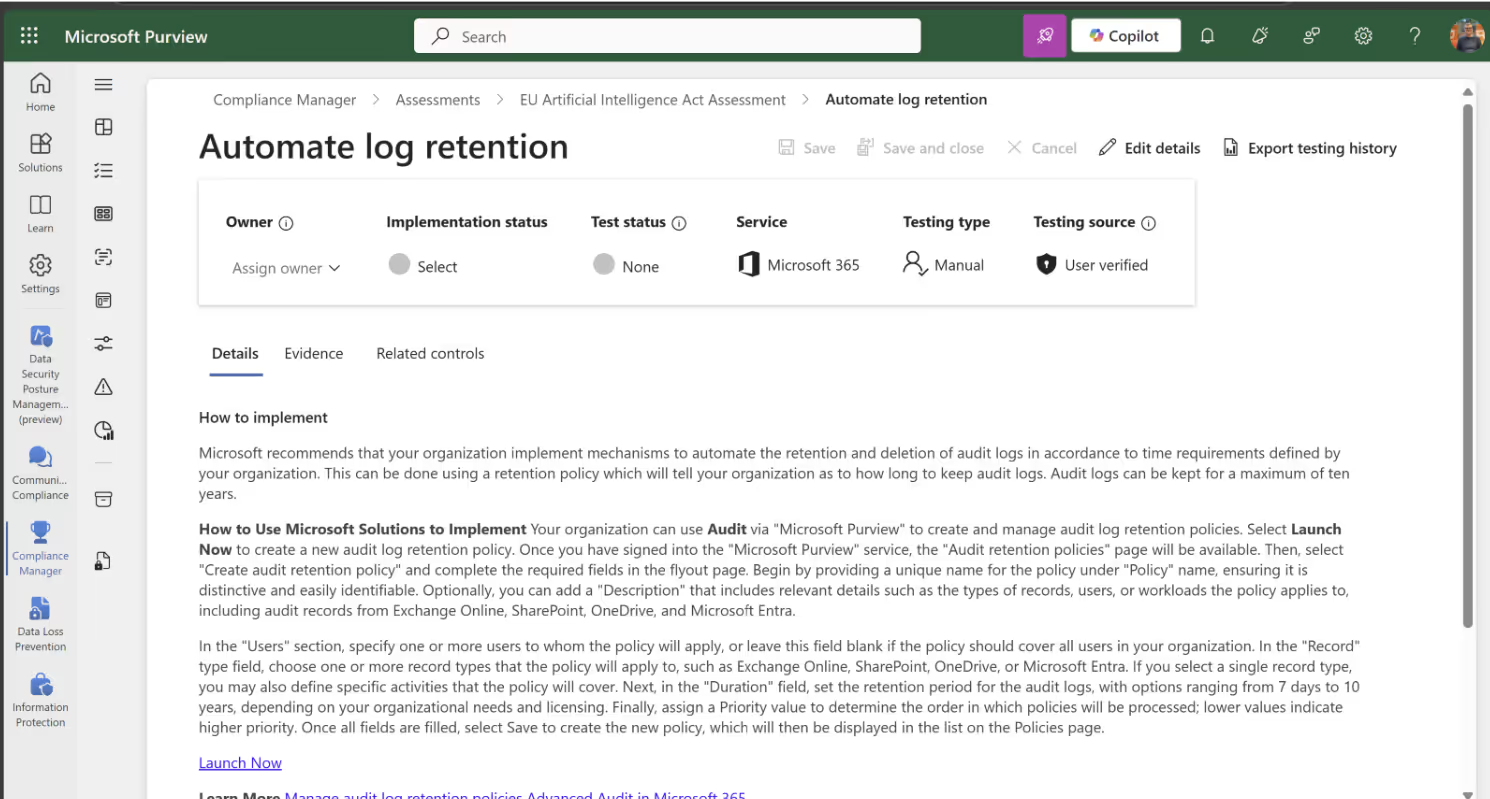

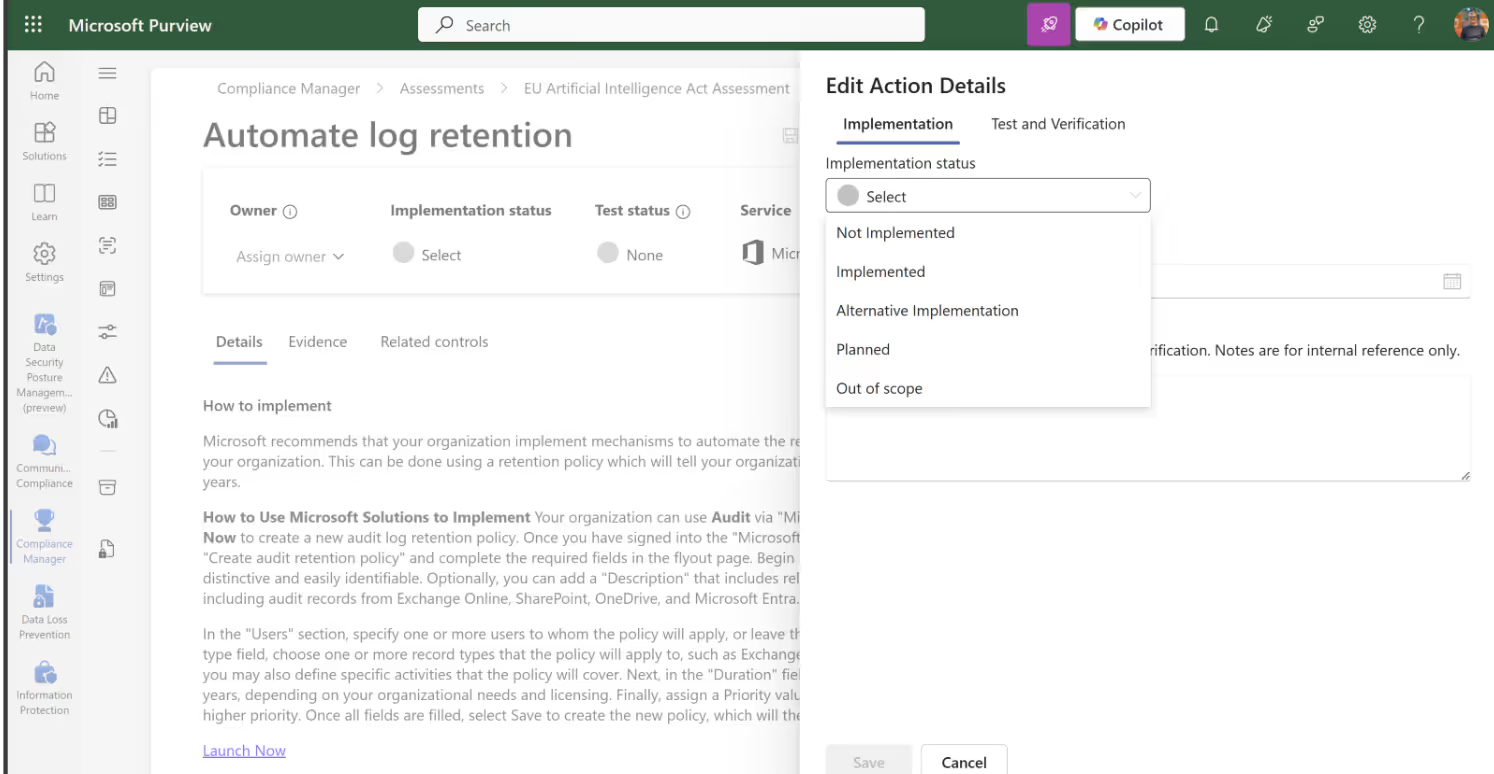

If you’re working in Microsoft 365, you can use Microsoft Purview Compliance Manager to review your tenant against the latest regulations. Just pick an assessment that matches your region and industry.

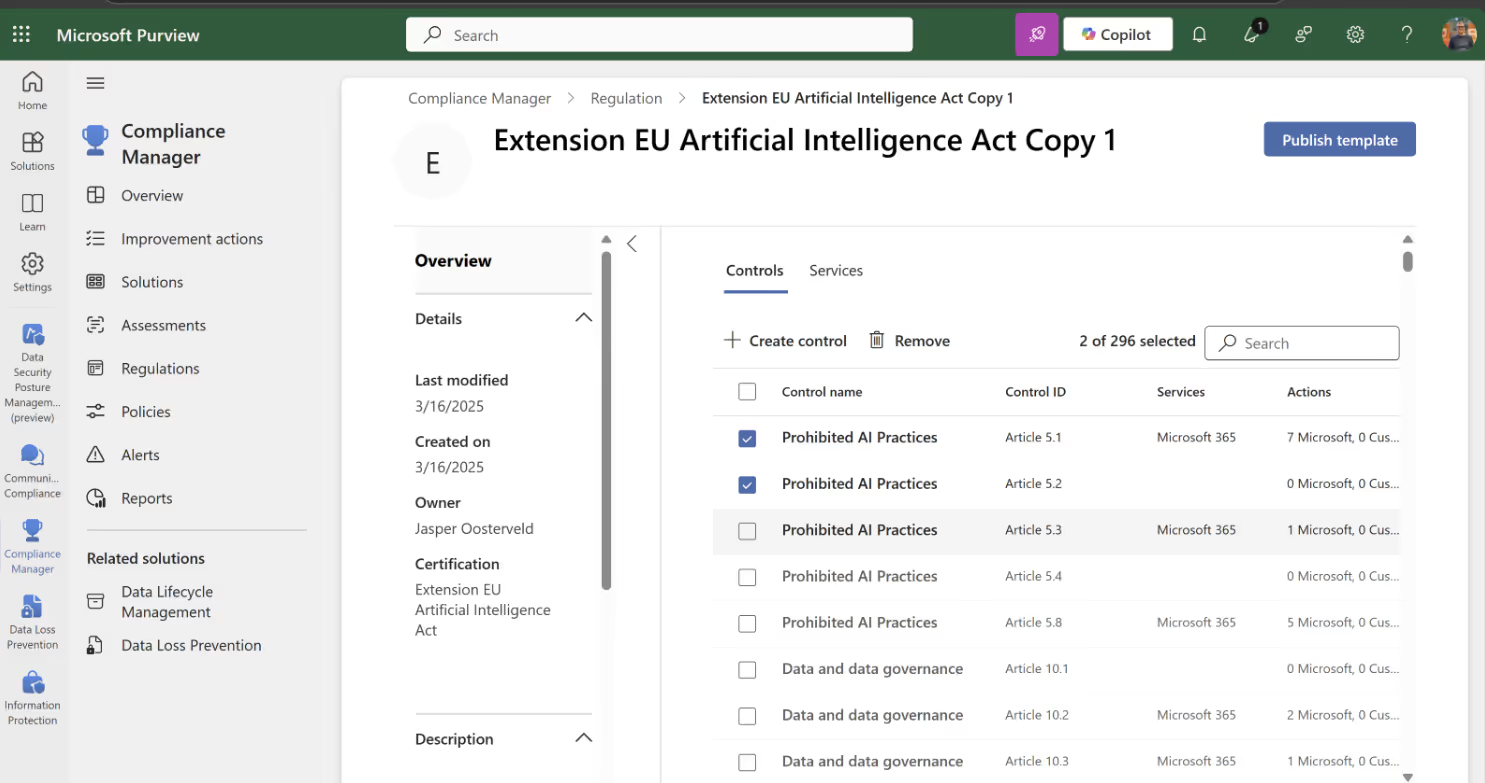

Microsoft recently reintroduced the option to copy an existing regulation and customize it to your needs. Handy, right?

Even better—Purview Compliance Manager gives you improvement actions, complete with explanations and implementation steps, so you’re not guessing what to do next.

You decide what to do with each one—implement it, or mark it as out of scope for your org.

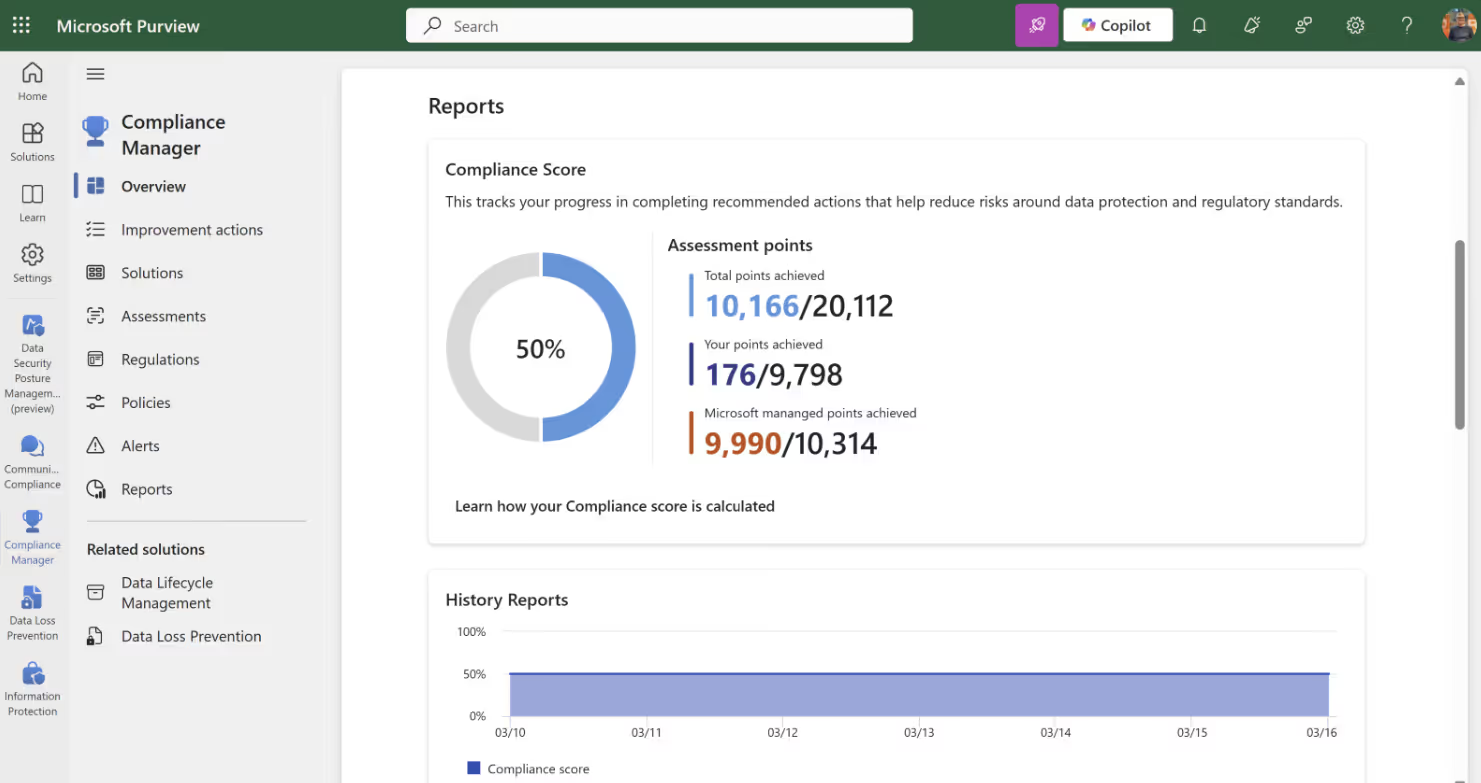

Heads-up: Microsoft won’t make your environment officially compliant. That part’s still up to you and your auditors. But the Compliance Score gives you a quick snapshot of where you stand right from the dashboard.
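To get a feel for how a score like this moves as you work through improvement actions, here is a simplified Python sketch. It assumes the score is the share of available points you have achieved, and that out-of-scope actions are left out of the calculation. Both are my assumptions for illustration, as are the status names and point values. Microsoft's actual weighting is not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class ImprovementAction:
    """Hypothetical improvement action with an assumed point value."""
    title: str
    points: int
    status: str  # "implemented", "not_implemented", or "out_of_scope"


def score_percent(actions) -> float:
    """Percentage of in-scope points achieved (simplified model)."""
    in_scope = [a for a in actions if a.status != "out_of_scope"]
    possible = sum(a.points for a in in_scope)
    if possible == 0:
        return 0.0
    achieved = sum(a.points for a in in_scope if a.status == "implemented")
    return round(100 * achieved / possible, 1)


if __name__ == "__main__":
    actions = [
        ImprovementAction("Require MFA for admins", 27, "implemented"),
        ImprovementAction("Enable audit logging", 9, "not_implemented"),
        ImprovementAction("On-prem backup policy", 3, "out_of_scope"),
    ]
    print(f"Score: {score_percent(actions)}%")
```

With the sample data above, 27 of 36 in-scope points are achieved, so the sketch reports 75.0%.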

Balancing innovation and security in Microsoft 365 with Microsoft Purview

I’m skeptical and cautious about the rise of AI—especially when it comes to the potential loss of jobs. But I also see the power of generative AI within organizations.

There are real use cases. AI in meetings is one that stands out to me. It helps with notes, summaries, assigning tasks, and, more importantly, allows people to actually focus on the content of the meeting. The recently introduced Facilitator Agent supports this use case. I’ve seen it in action—it’s incredible.

Employees are also discovering their own use cases, especially with tools like ChatGPT. They’re inspired to innovate their work processes with the help of AI. And you don’t want to block that innovation. You want to encourage and support it. Like I said before, you’re not going to stop this AI train. Your employees will find ways around your security controls. Some might even see it as a challenge.

That’s why Microsoft Purview is important. It plays a key role in making sure AI can be used safely while still protecting your data. Here’s how:

Secure your Microsoft 365 Copilot data

Microsoft Purview has multiple services to help secure your data alongside Microsoft 365 Copilot. We’ve already talked about DLP and DSPM for AI. Now let’s take a closer look at two other important ones.

Microsoft Purview Information Protection (MIP)

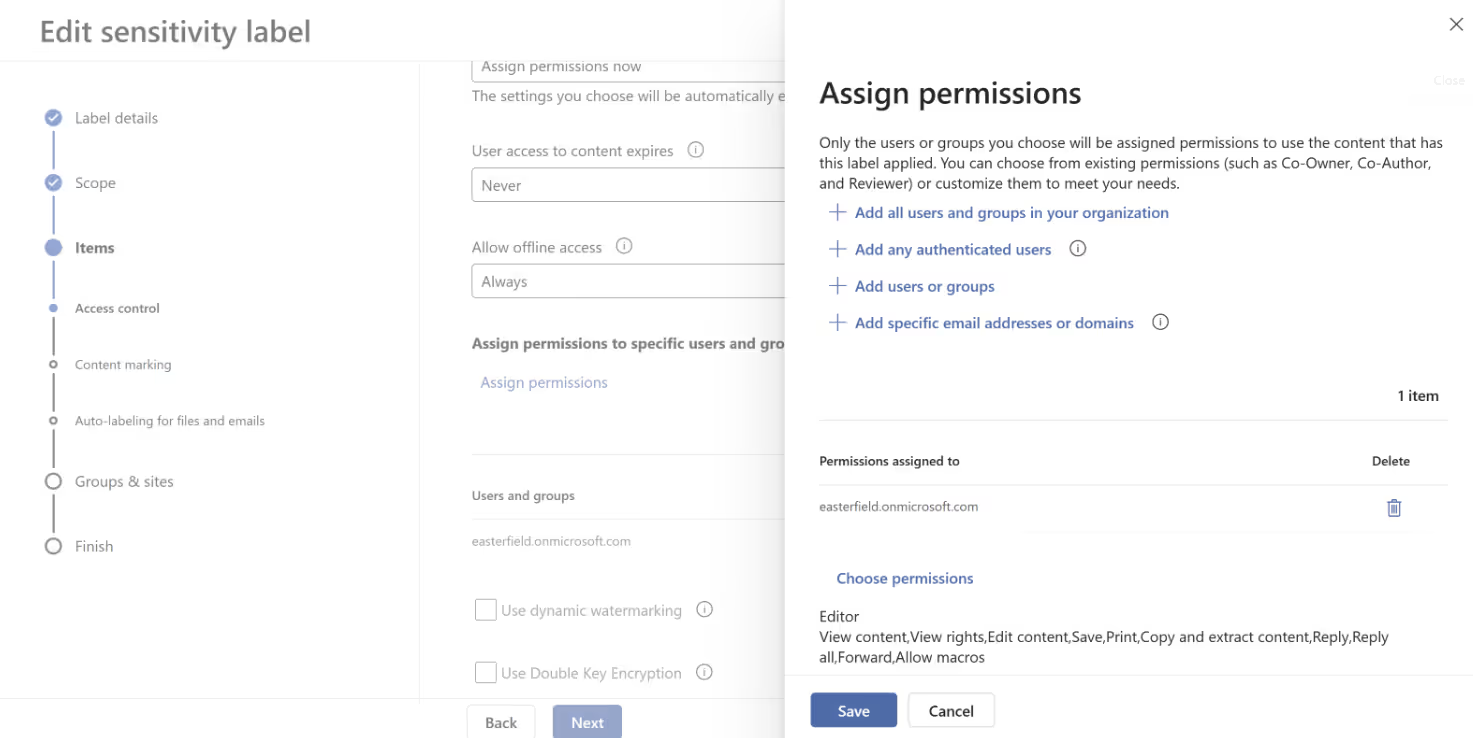

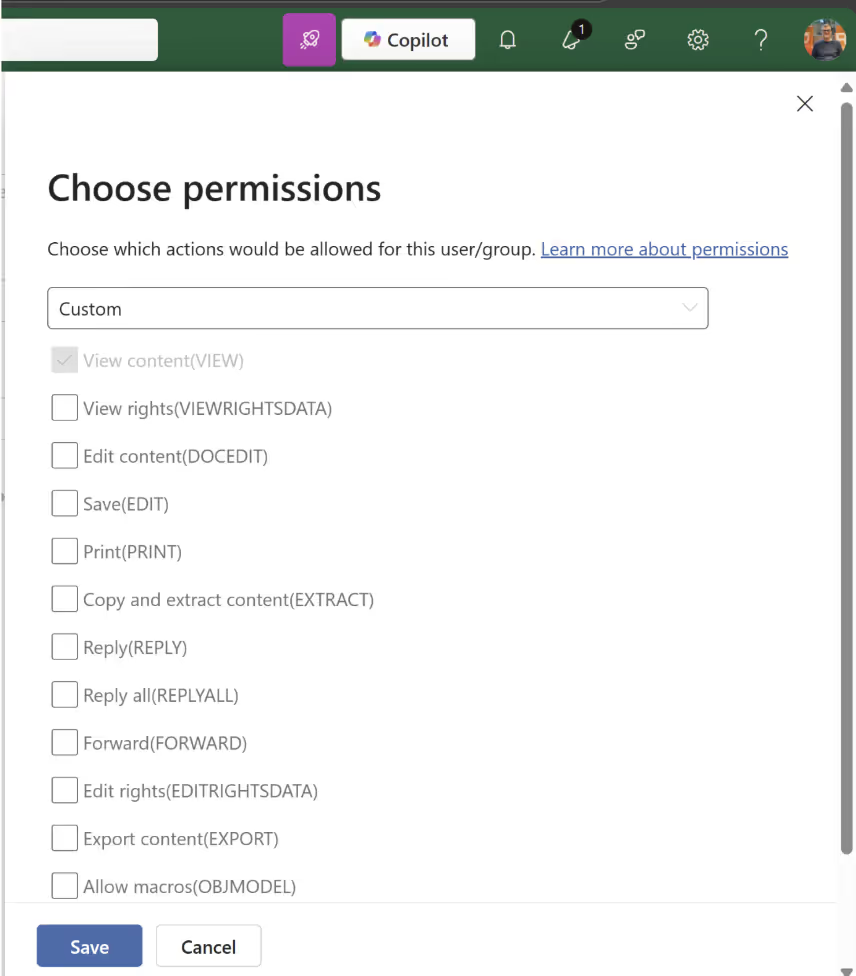

With MIP, you can use sensitivity labels to classify and protect sensitive information. Each label can be tied to specific users or groups with a defined permission level. For example:

If someone doesn’t meet the required permission level, Copilot won’t retrieve the content. You can also disable extract permissions.

Just be aware: disabling extract permissions also stops Copilot from returning that content to users who are otherwise allowed to open it.
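Here is a small Python sketch of that behavior. It models a label's permissions as a set of usage rights per user and assumes Copilot needs both view and extract rights before it will use the content. The `UsageRight` enum, the `copilot_can_use` function, and the user names are hypothetical. This is a mental model, not Purview's implementation.

```python
from enum import Flag


class UsageRight(Flag):
    """Simplified, hypothetical subset of label usage rights."""
    NONE = 0
    VIEW = 1
    EXTRACT = 2
    EDIT = 4


# Assumption: Copilot needs both rights before it will use the content.
REQUIRED_FOR_COPILOT = UsageRight.VIEW | UsageRight.EXTRACT


def copilot_can_use(label_rights: dict, user: str) -> bool:
    """True if the user holds every right Copilot is assumed to need."""
    granted = label_rights.get(user, UsageRight.NONE)
    return (granted & REQUIRED_FOR_COPILOT) == REQUIRED_FOR_COPILOT


if __name__ == "__main__":
    confidential = {
        "alice": UsageRight.VIEW | UsageRight.EXTRACT | UsageRight.EDIT,
        "bob": UsageRight.VIEW,  # can open the file, extract disabled
    }
    for user in ("alice", "bob", "carol"):
        print(user, copilot_can_use(confidential, user))
```

In this model Bob can still open the document himself, but Copilot will not draw on it for him, which is the side effect to watch for when you disable extract.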

Communication Compliance

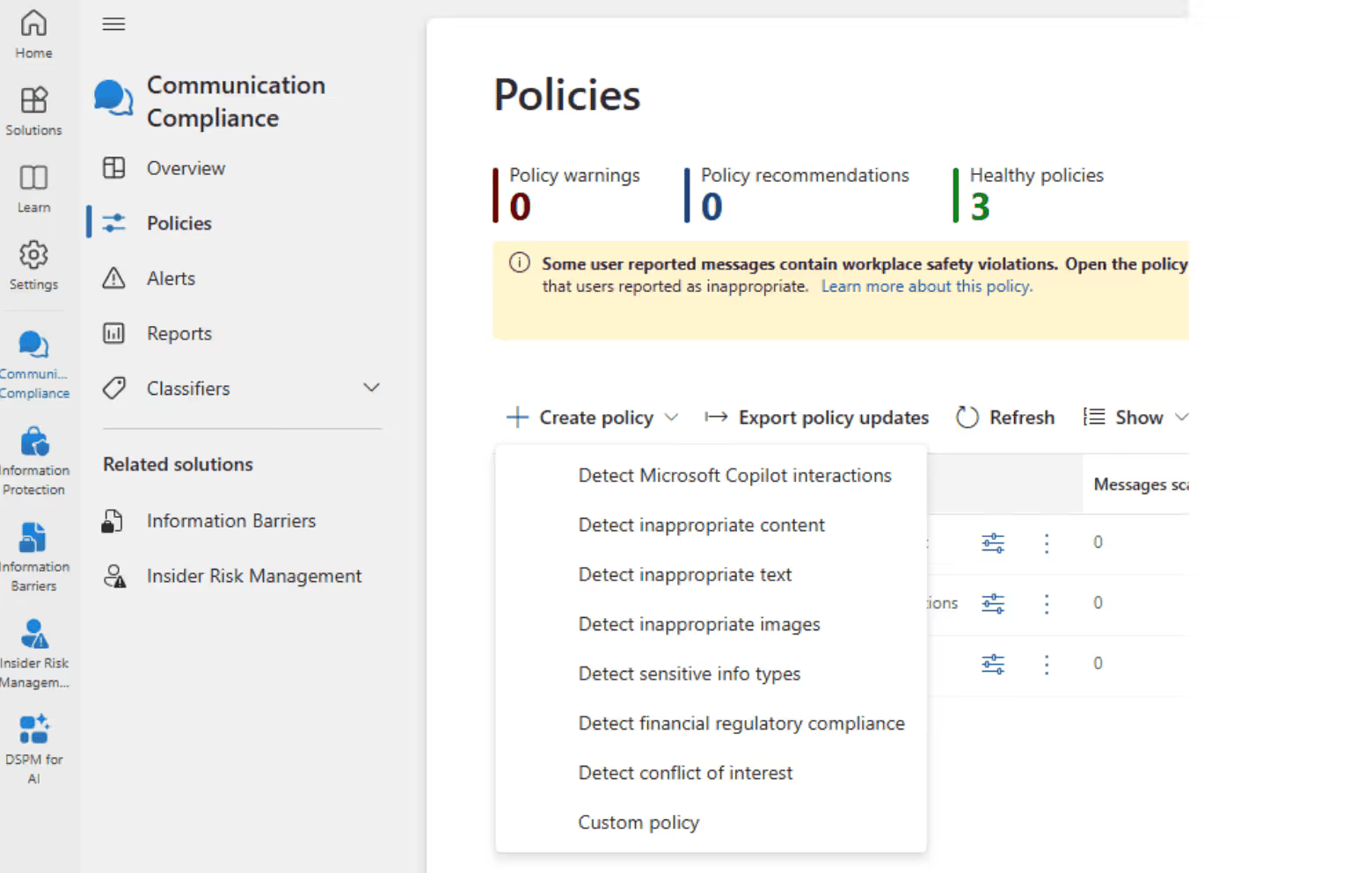

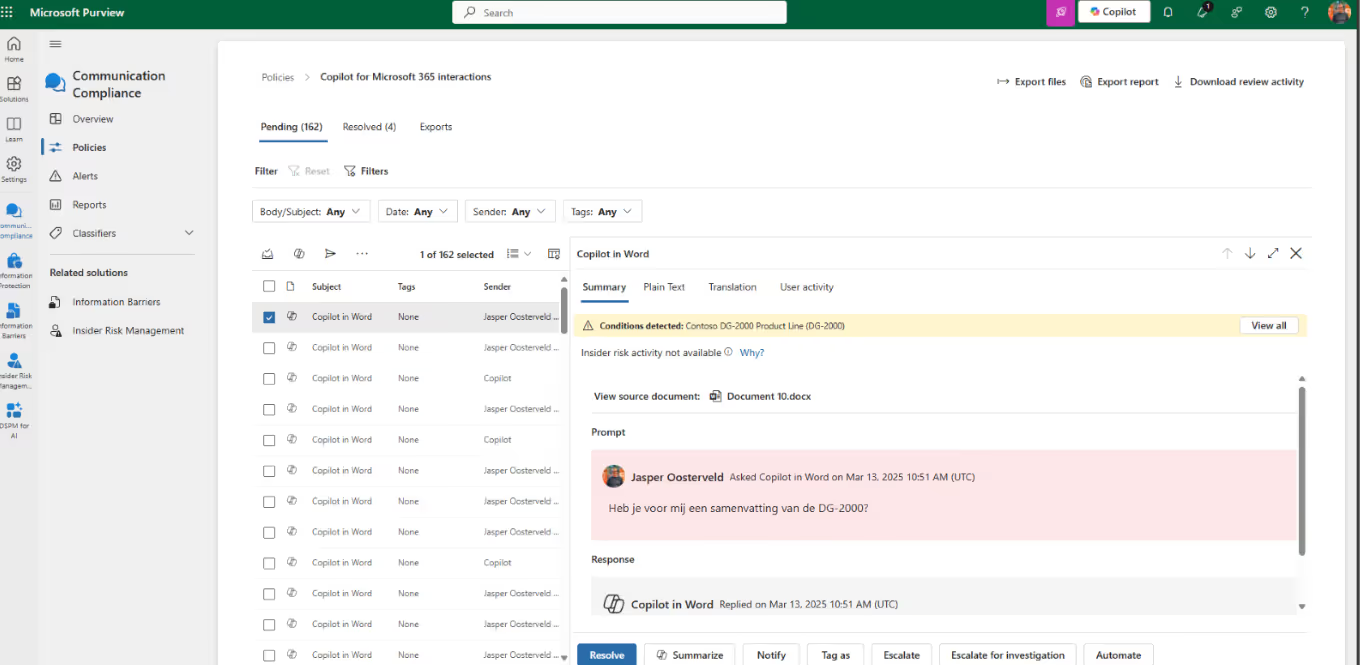

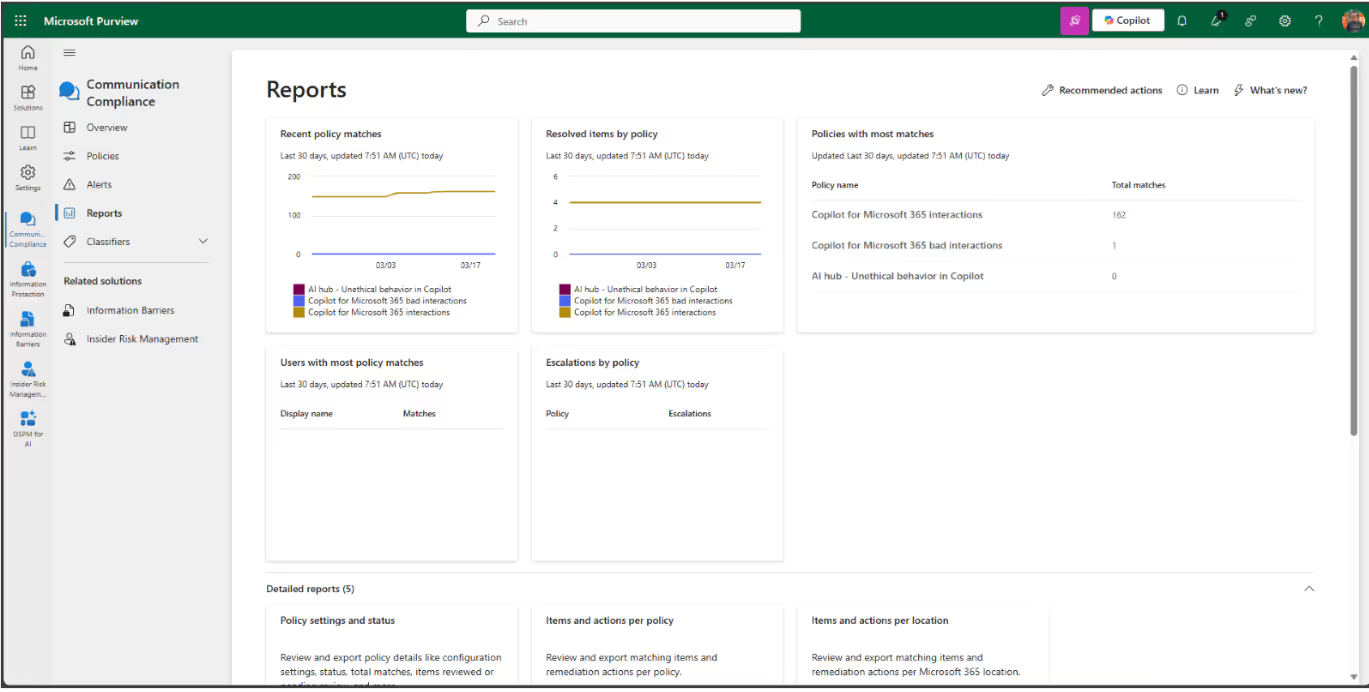

This one does exactly what the name says. It’s an insider risk solution that helps detect, capture, and act on potentially risky communication within your organization. It’s powerful and comes with a few default policies to get you started.

You can use this to monitor Copilot interactions—for example, to flag when sensitive information is included in a prompt.

The reports menu gives you a lot of valuable insights into what’s going on.

You can’t stop AI, but you can manage it

Generative AI isn’t going anywhere, and security challenges are only going to grow. But Microsoft 365 admins like you have powerful tools at your fingertips.

Want to dip your toe in the water before going all-in on Microsoft 365 Copilot? Try “Copilot for Work”—a free offering with functionality similar to ChatGPT that you can monitor using Purview. It’s a great way to test the waters without compromising data security.

And hey—if you’re using ShareGate, you’re already ahead of the curve. You’re enhancing security and supporting your Copilot journey by doing things like monitoring and managing oversharing and guest access, consolidating data across tenants to improve security (and cut costs!), and managing the lifecycle of Microsoft 365 workspaces with custom provisioning templates. That means stronger data quality, smarter collaboration, and more accurate information for Copilot to tap into.
